The $400 Billion Lesson: Why Data Monetization Fails and What Two Decades of Telematics Teaches Us About Capturing IoT Data Value

Every company you evaluate is generating sensor data. Every construction equipment manufacturer is retrofitting its fleets with telematics. Every insurance carrier is sitting on petabytes of driving data, desperately trying to find a signal in the noise. They all face the same fundamental paradox: they are drowning in data, yet they are starving for value.

The global insurance market addressable by telematics data is projected to reach between $330 billion and $400 billion by 2033. Telematics is the single most mature dataset in the Internet of Things (IoT) ecosystem. Yet, according to Gartner, 80% of companies attempting to monetize IoT data will fail.

The IoT Paradox

These failures rarely stem from a lack of data availability. The data demonstrably transforms operations when applied correctly. Nor do they stem from technological incapacity; we possess the ability to process petabytes of streaming information in near real-time. Rather, these failures are structural. They occur because founders and investors fundamentally misunderstand the physics of data value creation.

After processing billions of miles of telematics data and observing the lifecycle of hundreds of companies – from unicorn exits to quiet bankruptcies – we have seen distinct patterns emerge. Whether a company is attempting to monetize vehicle data, industrial sensor readings, or agricultural IoT streams, the same principles determine the outcome. The telematics market offers two decades of expensive, hard-won lessons that every data-driven venture must understand to avoid becoming a statistic in the next cycle of capital destruction.

The Great Misdirection: Data Is Not the Product

The most pervasive fallacy in the IoT economy is the belief that data is the product. It is not.

Data is a raw material. It is unprocessed inventory – costly to store, unrefined, and useless in its natural state. It only accrues value when it is refined and transformed into specific outcomes for specific customers who are experiencing specific pain points.

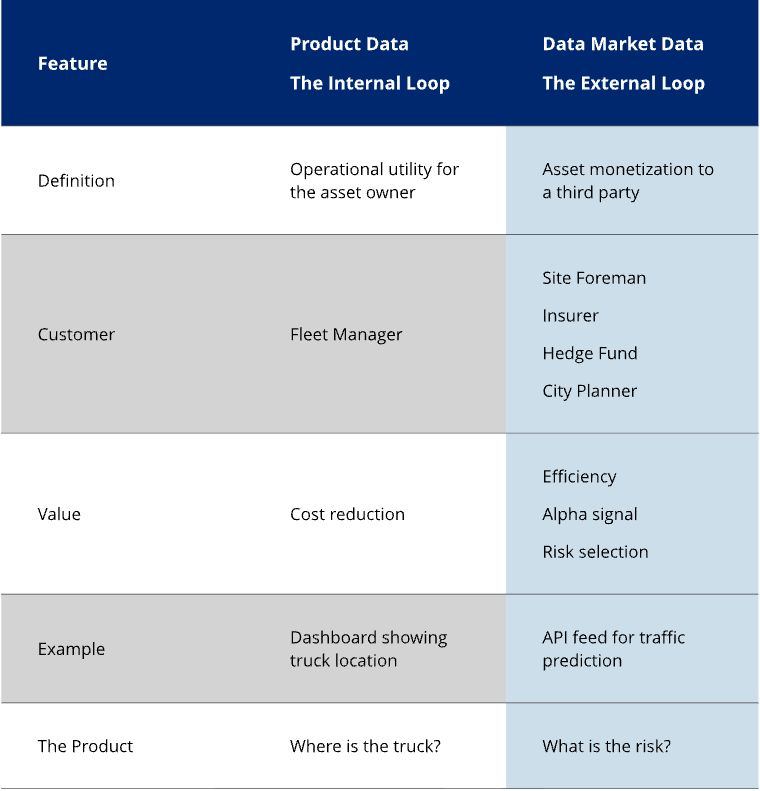

To navigate this landscape, we must draw a sharp strategic line between Product Data and Data Market Data.

Product Data (The Internal Loop) is an operational utility. It is the dashboard a fleet manager uses to route trucks or the alert a foreman uses to schedule maintenance. It is a feature of the hardware, designed to improve the asset operator’s own P&L.

Data Market Data (The External Loop) is asset monetization. This is data extracted from the operation and sold to a third party – such as an insurer or traffic planner – to build a new product that is not developed by the raw telematics data supplier and does not exist within the fleet’s operation.

The fundamental error most ventures make is attempting to sell Product Data (raw reports and dashboards) to Data Market buyers. Insurers and hedge funds do not want reports; they want the signal to build their own derived products.

The “Product Data” vs. “Data Market” Framework

Watch how this confusion plays out identically across industries:

1. The Transportation “Dots on a Map” Fallacy

For years, we operated under the assumption that the product was GPS coordinates and speed metrics. We sold “dots on a map.”

But the flaw in this model was exposed by a common call to our sales team: “Thanks to your system, I identified the bad drivers and fired them. The rest are scared. I’m keeping the box in the truck as a dummy deterrent, but I’m cancelling the subscription.”

The business model collapsed because we solved a finite problem with a recurring fee.

To survive, the industry had to pivot to problems that never end. Fleet managers do not buy data. They do not want another dashboard.

They buy risk mitigation. They buy video telematics to exonerate drivers and avoid lawsuits. They buy safety tools to reduce accident frequency and lower insurance premiums. They buy the ability to keep their drivers on the road and their assets out of the courtroom.

They buy operational efficiency. They buy maintenance schedules that prevent costly roadside breakdowns. They buy engine diagnostics that predict failure before it happens. They buy routing that shaves miles off a delivery route. They buy electronic logging to meet hours of service requirements.

When fuel represents 25% of a fleet’s operating costs, even a modest data-driven improvement delivers substantial P&L impact. The data was always valuable, but the “product” – raw visibility – was not. By failing to translate raw signals into financial outcomes, early providers saw their value propositions erode into commoditization.
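The arithmetic behind that claim can be sketched in a few lines. This is only an illustration: the 25% fuel share comes from the text above, while the $10 million operating cost and the 5% efficiency gain are hypothetical figures, and the function name is invented for this example.

```python
def annual_fuel_savings(operating_cost: float, fuel_share: float, improvement: float) -> float:
    """Return annual savings when fuel spend is cut by the fraction `improvement`."""
    fuel_spend = operating_cost * fuel_share
    return fuel_spend * improvement


# Hypothetical fleet: $10M annual operating cost, fuel at 25% of costs,
# and an assumed 5% data-driven reduction in fuel consumption.
savings = annual_fuel_savings(10_000_000, 0.25, 0.05)
print(f"Annual savings: ${savings:,.0f}")
```

Framing the output as dollars saved rather than miles logged is the point: the same inputs that fill a dashboard can instead be expressed as a line on the P&L.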

2. The Construction Utilization Gap

In the heavy equipment sector, manufacturers spent years installing sensors that tracked every hydraulic pressure variance and engine parameter. They offered this data to contractors, who largely ignored it. Why? Because a site foreman does not have the time to interpret hydraulic pressure charts.

However, when the industry reframed the data around utilization – specifically addressing the fact that heavy equipment utilization averages only 50%-70% – the market shifted. The product became “reducing idle time by 20%.” The data remained the same, but the translation of that data into an operational directive created a billion-dollar efficiency market.
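As a rough sketch of that translation, the snippet below turns raw engine-hour logs into a utilization figure and an idle-time target. The record layout, the field names, and the sample values are all assumptions made for illustration; real equipment telematics feeds will differ.

```python
from dataclasses import dataclass


@dataclass
class DailyLog:
    available_hours: float  # hours the machine was scheduled on site (assumed field)
    engine_hours: float     # hours the engine was running (assumed field)
    idle_hours: float       # engine running with no productive work (assumed field)


def utilization(logs: list[DailyLog]) -> float:
    """Share of available hours spent on productive work."""
    available = sum(log.available_hours for log in logs)
    working = sum(log.engine_hours - log.idle_hours for log in logs)
    return working / available if available else 0.0


def idle_target(logs: list[DailyLog], reduction: float = 0.20) -> float:
    """Total idle hours allowed after cutting idle time by `reduction`."""
    return sum(log.idle_hours for log in logs) * (1 - reduction)


# Hypothetical three-day sample for one machine.
logs = [DailyLog(10, 7, 2), DailyLog(10, 6, 1.5), DailyLog(10, 8, 2.5)]
print(f"Utilization: {utilization(logs):.0%}")
print(f"Idle-hour target after a 20% cut: {idle_target(logs):.1f}")
```

A foreman can act on "keep idle time under this number" without ever reading a hydraulic pressure chart, which is the reframing the paragraph describes.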

3. The Agricultural Yield Revolution

John Deere does not sell soil moisture readings or GPS coordinates to farmers. If they did, they would be competing with free weather apps. Instead, they sell yield improvements.

By integrating sensor data into precision application machinery, they allow farmers to reduce fertilizer and pesticide inputs while increasing output. The result is a yield improvement of up to 15%. The sensor data is merely the mechanism; the product is the margin expansion for the farmer – a reality John Deere now codifies with their ‘Application Savings Guarantee’, where fees are waived if the outcomes aren’t delivered.

The pattern is universal and unforgiving: successful data monetization requires deep domain expertise to transform raw signals into business outcomes. Technology companies frequently assume data has inherent value. Industry operators know that value only exists at the point of application.

The Three Eras of Every Data Market

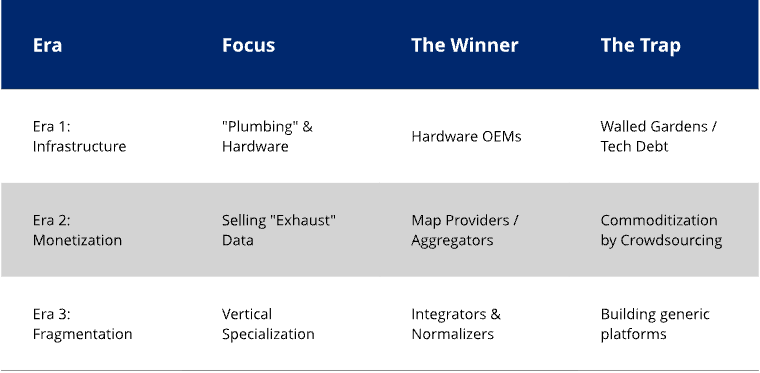

Having observed the telematics sector evolve from satellite systems costing $3,000 per unit to ubiquitous smartphone applications, we can categorize the maturation of data markets into three distinct eras [1]. This framework allows investors to identify exactly where a portfolio company sits on the maturity curve.

The Three Eras of Data Maturity

Era 1: Infrastructure Without Business Models

In this pioneer phase, the focus is entirely on “plumbing.” Companies build the pipes to extract data, often without a clear idea of who will pay for it.

In 1996, General Motors launched OnStar. It was a technological marvel, yet GM struggled to monetize it beyond basic emergency services [2]. Similarly, Qualcomm’s OmniTRACS dominated the trucking sector through expensive satellite hardware [3]. Everyone was collecting data, but the infrastructure was designed for internal reporting, not external monetization.

Critically – and we see this mirrored in today’s emerging industrial IoT markets – the infrastructure of Era 1 is often incapable of exporting data. These systems are walled gardens, designed to ingest data but not to share it, creating massive technical debt that hinders future monetization.

Era 2: The Monetization Breakthrough (and Disruption)

This era begins when a market participant successfully sells data to a third party, proving that the “exhaust” of one industry can be the fuel for another.

In telematics, this breakthrough occurred in 2005 – years before the iPhone – when a major search engine executed one of the first commercial purchases of fleet GPS data for its map team [1]. This was revolutionary. Commercial fleet data was used to power real-time traffic algorithms for the general public. The value of the data was decoupled from the original hardware.

This era created massive value events. Nokia acquired NAVTEQ for $8.1 billion to secure map data ownership.

However, Era 2 inevitably invites disruption. In the mapping sector, Waze and the proliferation of smartphones destroyed the commercial fleet data model for consumer mapping within 36 months. Crowdsourced data from millions of consumer phones rendered commercial fleet data redundant for consumer mapping [1]. The lesson is stark: generic data applications always commoditize. Specific applications maintain value. Commercial vehicle data retained niche value for truck-specific routing (e.g., height and weight restrictions), but the mass-market value evaporated.

Era 3: Fragmentation Before Consolidation

We are currently living through Era 3. Instead of consolidating into a unified standard, the telematics data market has fragmented. Specialized solutions have emerged for every vertical.

Equipment manufacturers are building proprietary “walled gardens.” Other providers are building bespoke solutions. Today, 65% of large fleets utilize multiple, incompatible telematics systems. Whoever successfully integrates across these fragmented platforms will capture enormous value, not by generating new data, but by normalizing the chaos.
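What "normalizing the chaos" might look like in practice can be sketched with two adapters that map incompatible payloads onto one common record. Both vendor formats below are hypothetical, as are all field names; the sketch only assumes that vendors differ in key names, timestamp encoding, and units.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

MPH_TO_KPH = 1.609344


@dataclass(frozen=True)
class Reading:
    vehicle_id: str
    timestamp: datetime  # always timezone-aware UTC
    lat: float
    lon: float
    speed_kph: float


def from_vendor_a(p: dict) -> Reading:
    # Hypothetical vendor A: epoch seconds, miles per hour, flat keys.
    return Reading(
        vehicle_id=p["veh"],
        timestamp=datetime.fromtimestamp(p["ts"], tz=timezone.utc),
        lat=p["lat"],
        lon=p["lon"],
        speed_kph=p["spd_mph"] * MPH_TO_KPH,
    )


def from_vendor_b(p: dict) -> Reading:
    # Hypothetical vendor B: ISO 8601 string, km/h, nested position.
    ts = datetime.fromisoformat(p["timestamp"].replace("Z", "+00:00"))
    return Reading(
        vehicle_id=p["vehicleId"],
        timestamp=ts.astimezone(timezone.utc),
        lat=p["position"]["lat"],
        lon=p["position"]["lng"],
        speed_kph=p["speedKph"],
    )


ADAPTERS = {"vendor_a": from_vendor_a, "vendor_b": from_vendor_b}


def normalize(source: str, payload: dict) -> Reading:
    """Route a raw payload to the adapter registered for its source system."""
    return ADAPTERS[source](payload)


a = normalize("vendor_a", {"veh": "T-100", "ts": 1700000000,
                           "lat": 47.6, "lon": -122.3, "spd_mph": 55.0})
b = normalize("vendor_b", {"vehicleId": "T-200", "timestamp": "2024-01-01T00:00:00Z",
                           "position": {"lat": 47.6, "lng": -122.3}, "speedKph": 80.0})
```

Downstream code sees only `Reading`, so adding a third system means writing one more adapter rather than touching every consumer.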

The Integration Crisis: Why Operations Beat Algorithms

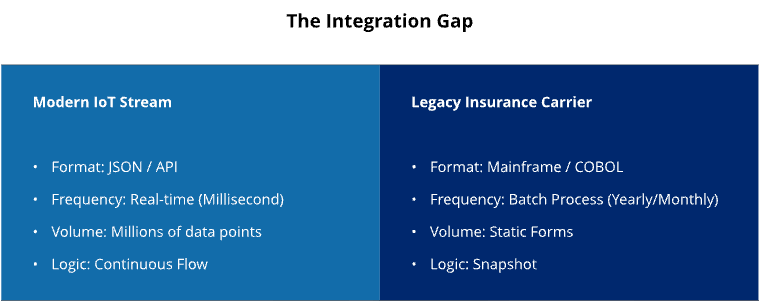

The most significant barrier to data monetization in the insurance sector is not a lack of data; it is an inability to ingest it.

As detailed in the article I wrote for Carrier Management, “Why Insurance Telematics Integrations Fail,” insurance carriers are physically unable to utilize the billions of miles of telematics data they theoretically have access to. This is not a failure of intent; it is a failure of architecture.

The vast majority of legacy carriers operate on mainframe systems that date back to the 1970s and 1980s. These systems operate on a batch-processing logic. They are designed to process static forms once a year. Telematics providers, conversely, deliver real-time JSON streams containing millions of data points.

These two worlds do not speak the same language. They do not exist in the same time paradigm.
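One way to picture the bridge between the two worlds is a small adapter that collapses a stream of JSON events into one fixed-width record per policy, the kind of file a batch job could load. This is a sketch under stated assumptions: the event fields (`policy_id`, `miles`, `hard_brakes`) and the 24-character record layout are invented for illustration and do not describe any real carrier system.

```python
import json
from collections import defaultdict


def summarize(json_lines):
    """Aggregate streaming JSON events into one summary per policy."""
    totals = defaultdict(lambda: {"miles": 0.0, "hard_brakes": 0})
    for line in json_lines:
        event = json.loads(line)
        entry = totals[event["policy_id"]]
        entry["miles"] += event.get("miles", 0.0)
        entry["hard_brakes"] += event.get("hard_brakes", 0)
    return dict(totals)


def to_fixed_width(policy_id: str, summary: dict) -> str:
    # Hypothetical layout: policy id (10 chars, left-justified),
    # miles in tenths (9 digits, zero-padded), hard brakes (5 digits).
    miles_tenths = round(summary["miles"] * 10)
    return f"{policy_id:<10}{miles_tenths:09d}{summary['hard_brakes']:05d}"


# Hypothetical events as they might arrive from a telematics provider.
stream = [
    '{"policy_id": "P123", "miles": 12.4, "hard_brakes": 1}',
    '{"policy_id": "P123", "miles": 30.1, "hard_brakes": 0}',
    '{"policy_id": "P999", "miles": 5.0, "hard_brakes": 2}',
]
batch = [to_fixed_width(pid, s) for pid, s in sorted(summarize(stream).items())]
for record in batch:
    print(record)
```

Nothing here is algorithmically interesting, which is the point: the hard part is agreeing on the layout, the aggregation window, and the delivery schedule with the system on the receiving end.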

The Integration Clash

This integration failure is not unique to insurance. We see identical patterns in construction, where equipment tracking systems cannot talk to project management software. We see it in agriculture, where planting data sits in a silo separate from harvesting logistics. We see it in maritime shipping, where vessel telemetry is isolated from port authority systems.

The mundane, unglamorous challenge of making disparate systems communicate defeats brilliant algorithms and massive datasets every time. Companies that solve this integration problem capture disproportionate value. They do not win through technical superiority; they win through operational excellence and “boring” infrastructure.

The Unchanging Physics of Data Value

Across two decades of technology cycles – from satellite to cellular, from 3G to 5G, from simple GPS to computer vision – certain principles of data value have remained immutable.

1. Relevance Beats Volume

In 2009, the Washington State Department of Transportation (WSDOT) conducted a landmark study that fundamentally altered the trajectory of public sector data. The results proved a counterintuitive truth: high-frequency data from a small sample of vehicles provided superior insights compared to low-frequency data from a massive fleet. This wasn’t just a technical finding; it was the commercial proof point that opened governments at all levels to using telematics for land use planning. The lesson remains immutable: “Signal over Noise” is what buyers pay for. More data often simply equals higher storage costs; the right data, validated by a trusted authority, is what creates a market.

2. Specificity Prevents Commoditization

Generic data always trends toward zero marginal cost. Traffic data, once a premium product, was commoditized by crowdsourcing. Weather data is provided freely by NOAA.

However, specific, high-context data retains pricing power. While generic traffic flows are free, accident reconstruction data for insurance claims commands a premium. While regional weather is free, hyper-local soil moisture data for variable-rate irrigation is worth thousands of dollars per farm annually. The narrower and more specific the application, the more sustainable the economic moat.

3. Infrastructure Enables Everything

The invisible layer is where the actual war is won. It doesn’t matter if you have a breakthrough neural network if you cannot deliver the data into a legacy client’s workflow. The vast majority of failures occur because modern, real-time JSON streams crash against the walls of 1980s batch-processing mainframes. Companies that prioritize this “plumbing” – ensuring reliable delivery and lineage – build a competitive moat, while those relying solely on algorithmic superiority find themselves with a product that no one can ingest.

4. Operations Trump Technology

Throughout every era of the telematics evolution, companies with superior operations have defeated those with superior technology.

Consider the divergence between Progressive Insurance and the first wave of “Insurtech 1.0” companies. Progressive built a highly profitable, dominant business model using relatively basic telematics data. Conversely, heavily funded insurtechs, armed with advanced AI and superior user interfaces, lost billions of dollars.

The difference was not the technology; it was the operations. Progressive possessed the “scar tissue” required to manage claims, handle exceptions, and navigate regulatory environments. Technology without operational excellence is merely an expensive way to fail faster.

5. Trust is the License to Operate

Telematics data markets run on trust. Without it, customers will not give you permission to sell their data, and no one will sell you theirs.

Years ago, a leading telematics service provider sold its customer data, and local law enforcement used it to set speed traps. That destroyed trust.

The old banking rule of “know your customer” applies to anyone selling raw telematics data: know who is buying the data and how they intend to use it.

6. The Value Exchange Must Be Explicit

Customers inevitably ask: “What is in it for me?” It is their data; the technology company is merely the custodian. There must be a clear, tangible benefit for customers to grant permission for monetization.

This principle was crystallized at an industry conference where a Walmart executive stated they would be willing to share their proprietary logistics data – but only if it resulted in better roads and more efficient infrastructure usage. The trade was explicit: data for efficiency. Without that clear return on assets, the data remains locked.

7. The Prime Directive: Protect the Core

Whatever you do in the data business, you must always protect the core business that generates that data. This is the prime directive.

If your data monetization practices alienate your core customers, you will lose those customers. If you lose the customer, the data stream evaporates. You cannot build third-party products from a client base you have destroyed. There is no data market without a healthy product market to sustain it.

The Patient Capital Advantage

It is now well understood that hardware and deep tech require long time horizons. The market must now apply that same logic to data monetization.

The telematics market proves definitively that data maturity takes time. Between 2021 and 2024, the number of active investors making multiple deals in the insurtech space plummeted by 72%, dropping from 406 to just 113. This was not a cyclical dip; it was a structural correction.

Venture capital funds discovered a fatal mismatch. Insurance transformation requires a 7-10 year horizon to accumulate sufficient data for actuarial credibility. You cannot rush the “law of large numbers.” However, VC funds operate on 10-year lifecycles, requiring exits within 5-7 years. The math simply does not work.

The Capital Timeline Mismatch

This capital timeline mismatch creates a fatal internal conflict. In the early stages of a data market, there is a fierce struggle for resources inside the producers of raw telematics data. The companies generate an enormous amount of data, but it is not central to their core business. Consequently, data teams struggle to get on engineering schedules to produce data in a form that can be used by third parties.

Timing matters. There must be enough buyers in the market to justify this internal investment. While some data markets, such as telematics and sensor data for traffic analytics, are large enough to support robust trading, others remain niche. Truck drivers need truck-specific data – routes restricted by height or weight – but the market for this type of data is small. There simply are not enough buyers to support everyone who could sell this raw data.

If data markets don’t develop fast enough, management may pull the plug on what it considers a side business. Some data markets will simply not become large enough to justify the internal investment in supplying them. Data does not guarantee profit, and innovators often build products nobody wants. This is an opportunity for patient capital.

This retreat has created an unprecedented opportunity for family offices and permanent capital structures. Unlike VCs, who are forced to manufacture growth to meet artificial fund timelines, family offices can align their capital with the reality of the industry. They can support companies through the necessary “Valley of Death” – the infrastructure building, the data accumulation, and the market education – until they reach scale.

This patient capital thesis applies to every IoT data market:

Industrial IoT: Platforms need 5-7 years to achieve critical mass and displace legacy systems.

Smart Cities: Applications require multiple municipal budget cycles for adoption.

Healthcare Data: Platforms face 3-5 year regulatory approval processes before monetization begins.

Agriculture: Solutions need multiple growing seasons to prove ROI to skeptical operators.

The winners of the next decade will not necessarily be the companies with the most data or the most advanced algorithms. They will be the companies with capital structures that are aligned with the physical realities of their markets.

Actionable Advice for Data-Rich Ventures

If you are an operator sitting on sensor data – whether from robots, industrial equipment, or scientific instruments – the lessons from the telematics sector provide a clear playbook for monetization.

1. Find Your Outcome, Not Your Data Product

Stop organizing your business around selling data. Organize it around selling outcomes. Ask: What specific, measurable business result can we enable? Frame every product decision around that financial impact. If you cannot draw a straight line to a P&L improvement, you do not have a product.

2. Choose Depth Over Breadth

Resist platform ambitions in the early stages. The companies that try to be “the operating system for everything” invariably fail. The companies that solve specific, excruciating problems for specific industries succeed. It is far more profitable to own 80% of a niche vertical than 2% of a broad horizontal market.

3. Invest in the “Boring” Infrastructure

While competitors chase AI breakthroughs and press releases, invest in reliability, integration, and data governance. The unsexy foundations determine long-term success. The ability to integrate with a 1980s mainframe is a more valuable competitive advantage than a marginally better neural network.

4. Partner With Domain Experts

Technology alone never wins. You need industry insiders who understand the “hidden wiring” of the sector – the workflows, the regulations, the buying processes, and the politics. The marriage of technical capability and deep domain expertise creates true defensibility.

5. Structure for the Long Game

If your capital structure requires an exit in 3-5 years, you are already disadvantaged. Data markets require patience. Align your funding sources with your market’s maturation timeline. Do not take VC money for a permanent capital problem.

6. Stress-Test Your Data Viability

As a data market matures, producers of raw telematics or sensor data must ruthlessly determine the long-term viability of their asset before building the pipes to sell it. You need to map how pricing and market size will evolve.

The critical questions to ask are: Are there low-cost data substitutes? (If yes, margins will collapse). How specialized is the production? (If generic, it will commoditize). Will the number of buyers increase? (If you are selling to a market of one, you do not have a business; you have a hostage situation).

7. Respect the Supplier Saturation Point

If you produce raw telematics data, you must analyze the supplier capacity of the target market. Some data markets will grow and become robust, but they will structurally only need a limited number of suppliers to function. Once that capacity is filled, the window closes. If you miss the window, you must look for other markets.

Beyond your own analysis, how can you determine what a market will look like? Remember the innovator’s reality: you face both the risk of product-market failure (no market demand) and the risk of poor timing (market saturation). You must distinguish between the two before you invest.

8. Navigate the “Shadow Phase” Strategically

In the early stages of a data market, real liquidity is silent. While the hype cycle produces press releases, the revenue cycle produces NDAs. This “Shadow Phase” is a feature, not a bug. Suppliers require secrecy to test a monetization strategy that hasn’t yet proven its value without alarming their core customer base, while buyers demand it to hide their strategic roadmaps. If you interpret market silence as a lack of demand, you are misreading the room. By the time a data partnership is announced in a press release, you have already missed the early entry point.

9. Sequence Your Talent Stack Correctly

A common failure mode is hiring a Data Scientist when you actually need a Data Engineer. You must distinguish between the roles: Data Engineers build the pipes (moving data); Database Administrators (DBAs) maintain the storage (keeping the lights on); Data Scientists refine the product (extracting value). Do not hire a PhD Data Scientist to build your ETL pipelines. They will be frustrated, expensive, and ineffective. You cannot manufacture a product from inventory you cannot access. Build the logistics infrastructure (Engineers/DBAs) before you hire the alchemists (Scientists).

The Next $400 Billion

The telematics market reaching $400 billion is not the end of the story; it is the prologue.

Every sensor being deployed today, every IoT device being connected, and every data stream being generated is following the same evolutionary path that telematics blazed. Industrial IoT, smart cities, healthcare monitoring, and supply chain tracking are all in various stages of the same three eras.

The question is not whether these markets will mature – they will. The question is whether today’s innovators will learn from two decades of expensive lessons or whether they will pay the tuition again.

For those willing to study history, to understand market physics rather than just technological possibilities, and to execute with operational discipline, the opportunity is unprecedented. The data revolution isn’t coming; it is here. But value will not flow to those with the most raw inventory. It will flow to those who understand that data monetization is ultimately about transformation, not collection.

After 20 years of building in this space, one lesson stands above the rest: The winners aren’t the ones selling the data. The winners are the ones delivering the outcome.

What Comes Next

The laws of data physics we identified in telematics are not unique to transportation; they are universal. Yet, we see the same expensive architectural and business model errors currently being codified into the foundations of Industrial IoT, Smart Cities, and Digital Health.

This series continues by applying this operational lens to those emerging markets, distinguishing the inevitable winners from the infrastructure that will be rebuilt at a loss.

Endnotes

[1] Indenseo Research and industry analysis, 2025.

[2] Radius Payment Solutions. The History of Telematics. Radius Payment Solutions, 2023.

[3] Webfleet. The history of telematics: from the 1960s to today. Webfleet, 2023.

Author Note: This analysis draws on publicly available academic research, industry data, and regulatory filings. Statistics are cited to primary sources where available.

AI Disclosure: Research compilation utilized AI tools to discover and verify publicly available data sources and citations. All analysis, interpretation, and conclusions are original work.